We know Artificial Intelligence (AI) is coming, and we see the Internet of Things (IoT) happening.

We know trains, planes and automobiles will become autonomous. This is not news. We know data is key to modern industries, we know robots will communicate, and we understand and accept that securing all of this will be a nightmare. The consequences of failure could be cataclysmic. I will refrain from inserting the obligatory Terminator graphic here.

We also know that companies, projects and devices need not only to communicate, but also to share information securely. This is another issue. If nobody, including the NSA, GCHQ, governments or large tech companies, can secure the information, who can? Not only that, but the holder of the data has a wee bit more power than they should, especially if they control access. If control is handed to third parties, it gets much worse.

We need a way to share information securely and occasionally privately. This data cannot be blocked, damaged, hacked or removed from the participants whilst they are in the group. The group must decide as a group on membership; no individual should have that authority. No administrators or IT ‘experts’ should have any access to a company’s critical data.

Potential Solution

With the SAFE network, developers have a ton of currently untapped capability. Here I will describe one such approach (of many) that will allow all of the above systems to co-operate securely and without fear of password theft or server breaches.

SAFE has multisig data types (the machinery is there, but not yet enabled). These data types are called Mutable Data (MD) and can be mutated by their owners. A possible solution quickly becomes obvious.

A conglomerate starts with one entity, which could be a person or a company. Let’s call that entity ‘A’. Now ‘A’ creates a Mutable Data item that represents the conglomerate, which we will call ‘C’. Because ‘C’ is a Mutable Data item, it has a fixed address on the network. It has a fixed address but no fixed abode, so it appears on different computers at different times. Only the network knows where the data is. This ‘C’ can then hold almost anything. Some examples might be:

- A list of (possibly encrypted) public keys that represent each participant’s ‘root key’.

- A list of (possibly encrypted) participants.

- An embedded program

- Any data at all (endless opportunities really)
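To make the idea of ‘C’ a little more concrete, here is a minimal Python sketch of an item with a fixed address, a set of owners and free-form contents. This is a toy model and not the SAFE API: the class `MutableData`, the helper `fixed_address`, the type tag `15001` and the key strings are all assumptions made up for illustration.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass, field


def fixed_address(name: str, type_tag: int) -> str:
    """Derive a stable address from a name and a type tag (hypothetical scheme)."""
    return hashlib.sha256(f"{type_tag}:{name}".encode()).hexdigest()


@dataclass
class MutableData:
    """Toy stand-in for a Mutable Data item; not the real SAFE data type."""
    name: str
    type_tag: int
    owners: set[str] = field(default_factory=set)             # owner public keys
    entries: dict[str, bytes] = field(default_factory=dict)   # any payload at all

    @property
    def address(self) -> str:
        # The address depends only on the name and type tag, so it stays
        # the same however the owners or entries are mutated.
        return fixed_address(self.name, self.type_tag)


# Entity 'A' creates the conglomerate item 'C' and is its first owner.
c = MutableData(name="conglomerate-C", type_tag=15001, owners={"pubkey-A"})
address_before = c.address

# Mutating the contents does not move the item to a new address.
c.entries["participants"] = b"pubkey-A"
address_after = c.address
```

The point of the sketch is only that the address is derived from something that never changes, while the owners and contents can.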

Let’s take a few cases of applications for different industries. Note that these are not industry-specific applications; the aim is to facilitate global collaboration. Below is a snapshot of some possibilities, but even these are likely to be superseded by more innovative and better thought out approaches. However, these examples work. Each industry will later feature in a more detailed analysis.

1: The Autonomous Vehicle Industry

Photo by Alessio Lin on Unsplash

These vehicles should obviously communicate with one another, and indeed there are moves to enforce collaboration. However, there’s the problem of ownership as described above, and also the risk of corruption within the industry, such as altering documents and data (to match emissions targets, for example).

Potential Solution

A simple solution here (which will repeat in many cases) involves the creation of a fixed network address (via a Mutable Data item in SAFE). This will be created by a single entity, say Ford. To collaborate, they add GM, Tesla etc. as ‘owners’ of this item. With multisig, any change must be approved by a majority of the ‘owners’. Now we have a data item on a network that is not owned by any one of the companies involved, but is editable by a majority of those owners.
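The majority rule can be sketched in a few lines of Python. This is a hedged illustration only: `SharedItem` and its methods are hypothetical names, and a plain set of owner names stands in for what would really be a set of verified cryptographic signatures.

```python
from dataclasses import dataclass, field


@dataclass
class SharedItem:
    """Toy multisig item: a change applies only with majority owner approval."""
    owners: set = field(default_factory=set)
    entries: dict = field(default_factory=dict)

    def has_majority(self, approvals: set) -> bool:
        # Only approvals from current owners count, and strictly more
        # than half of the owners must approve.
        valid = approvals & self.owners
        return 2 * len(valid) > len(self.owners)

    def add_owner(self, new_owner: str, approvals: set) -> bool:
        if not self.has_majority(approvals):
            return False
        self.owners.add(new_owner)
        return True

    def put(self, key: str, value: str, approvals: set) -> bool:
        if not self.has_majority(approvals):
            return False
        self.entries[key] = value
        return True


# Ford creates the item and is the sole owner, so Ford alone is a majority.
item = SharedItem(owners={"ford"})
added_gm = item.add_owner("gm", approvals={"ford"})

# With two owners, one approval is no longer enough.
added_tesla_alone = item.add_owner("tesla", approvals={"ford"})
added_tesla = item.add_owner("tesla", approvals={"ford", "gm"})

# With three owners, no single company can edit the item by itself.
solo_edit = item.put("spec", "v2", approvals={"tesla"})
joint_edit = item.put("spec", "v2", approvals={"ford", "tesla"})
```

Note how the creator loses unilateral control as soon as a second owner is added, which is exactly the property we are after.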

For each ‘owner’, the data item may contain a list of public keys or companies, and this list allows us to validate items signed by those keys as valid from the perspective of this conglomerate of companies. So what can the companies do now?

The companies can now create their own data types, for example, another Mutable Data item that contains a list of the public keys of the vehicles they have manufactured. Now any vehicle added to the company list can be confirmed valid by any other vehicle (or system), and the vehicles or systems can now communicate safely, in a way that is cryptographically guaranteed.
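The two-level check described here (is the company in the conglomerate, and is the vehicle in that company’s list?) might look something like the following Python sketch. All the key strings, the variable names and the function `is_trusted_vehicle` are invented for illustration, and plain set membership stands in for real signature verification.

```python
# Company keys listed in the conglomerate item (hypothetical values).
conglomerate_keys = {"ford-key", "gm-key", "tesla-key"}

# Each company publishes its own list of vehicle public keys.
# "rogue-key" publishes a list too, but is not part of the conglomerate.
vehicle_lists = {
    "ford-key": {"vehicle-1", "vehicle-2"},
    "gm-key": {"vehicle-9"},
    "rogue-key": {"vehicle-666"},
}


def is_trusted_vehicle(vehicle_key: str, company_key: str) -> bool:
    """Accept a vehicle only if its company is in the conglomerate
    and the company currently lists that vehicle."""
    if company_key not in conglomerate_keys:
        return False
    return vehicle_key in vehicle_lists.get(company_key, set())


ford_vehicle_ok = is_trusted_vehicle("vehicle-1", "ford-key")
rogue_vehicle_ok = is_trusted_vehicle("vehicle-666", "rogue-key")

# Revoking a vehicle is just removing its key from the company list.
vehicle_lists["ford-key"].discard("vehicle-2")
revoked_vehicle_ok = is_trusted_vehicle("vehicle-2", "ford-key")
```

Any vehicle or system can run the same check, because both lists live at fixed addresses on the network.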

The communications may now be encrypted between vehicles and may even include micro-payments for services such as shared charging in the future.

2: Robotic & AI Industries

Photo by Alex Knight on Unsplash

Similarly to the above mechanism (although there might be no conglomerate or top level group involved), it would be simpler if robots followed a common route to information sharing. In this section, wherever the reader sees ‘robots’, they should think of robots or AI networks.

The interesting thing here will be the potentially enormous quantity of information that robots could share globally. Imagine for instance that a robot maps out a room or learns CPR, or perhaps even the location of a good repair shop. Would it not be incredible (and obvious) if they could share that information securely and immutably with all the other robots across the globe?

Robots, like humans, will likely not know everything at all times, but will instead wish to tap into a much larger data set than they can hold. Importantly though, they would want access to a more recently updated data set than they could hope to maintain on their own. Yes, even robots will not be omniscient: like other species they will continue to be limited by natural laws to some degree.

There are several projects looking at ways to enable information sharing between robots, such as the recent(ish) EU project RoboEarth. But how do the robots know the data is from one of them and not a corrupt source? This surely will be important?!

So, here we wish robots to be sure of identity and - as with vehicles - have the ability to revoke (invalidate) such identities, or possibly even remove recent posts from the collective.

Potential Solution

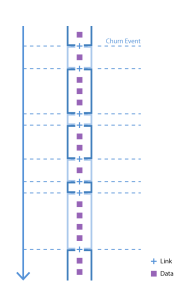

A robot manufacturer can create a Mutable Data item. Each robot built will create a key-pair, and its public key will be added as an owner of the Mutable Data (this can be made into a tree structure to hold billions of identities). The data element will be the location of further lists of valid robot keys; these may also be Mutable Data items.
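One way to picture the tree structure is a root that points at many smaller key lists, with each robot key landing in one list. The Python sketch below is only an assumption about how this could be arranged: `KeyTree`, `bucket_for` and the fan-out of 16 are hypothetical, and ordinary sets stand in for the secondary Mutable Data items.

```python
import hashlib

BUCKETS = 16  # hypothetical fan-out of the root item


def bucket_for(public_key: str) -> int:
    """Pick a leaf list from the first byte of the key's hash."""
    digest = hashlib.sha256(public_key.encode()).digest()
    return digest[0] % BUCKETS


class KeyTree:
    """Root item pointing at leaf lists of valid robot keys (toy model)."""

    def __init__(self) -> None:
        # In the scheme described above the root would hold the *locations*
        # of further Mutable Data items; here each leaf is simply a set.
        self.leaves = {i: set() for i in range(BUCKETS)}

    def register(self, public_key: str) -> None:
        self.leaves[bucket_for(public_key)].add(public_key)

    def revoke(self, public_key: str) -> None:
        self.leaves[bucket_for(public_key)].discard(public_key)

    def is_valid(self, public_key: str) -> bool:
        # Only one leaf has to be consulted, however many keys exist.
        return public_key in self.leaves[bucket_for(public_key)]


tree = KeyTree()
for serial in range(1000):
    tree.register(f"robot-{serial}")

known_robot = tree.is_valid("robot-42")
unknown_robot = tree.is_valid("robot-5000")

tree.revoke("robot-42")
revoked_robot = tree.is_valid("robot-42")
```

Adding further levels in the same way lets the number of identities grow without any single list becoming unmanageable.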

The secondary Mutable Data items above will contain many more robots’ keys. Each of these data items can now be thought of as a mini-community. In these communities a delegate can be chosen to be one of the owners of the core Mutable Data item (i.e. the conglomerate one as above). This delegate will vote as per the group’s instructions, and failure to do so will mean the group (all owners) will remove that robot from the group and the community as a whole.

Now we have a mechanism where billions of robots can recognise the public keys of their peers. They can use these keys in any key exchange (ECDH etc.), or use asymmetric encryption keys, so they can encrypt data and communications between themselves. They can confirm the validity of any data posted (cryptographically signed by a robot) and that the robot is behaving as expected by the rest of the robots.
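To show what a key exchange buys us, here is a toy Python sketch in which two robots derive the same secret from each other’s public values. Please note the swap: this is classic finite-field Diffie-Hellman rather than ECDH, chosen because it needs nothing beyond the standard library, and the parameters `P` and `G` are far too small for real use. The function names are my own; a real system would use a vetted cryptography library.

```python
import hashlib
import secrets

# Toy parameters (assumption for illustration): a 127-bit Mersenne prime.
# This is NOT a secure group size; it only demonstrates the mechanism.
P = 2**127 - 1
G = 3


def keypair() -> tuple:
    """Return (private, public) where public = G^private mod P."""
    private = secrets.randbelow(P - 3) + 2  # value in [2, P - 2]
    public = pow(G, private, P)
    return private, public


def shared_key(my_private: int, their_public: int) -> bytes:
    """Combine my private value with the peer's public value."""
    secret = pow(their_public, my_private, P)
    # Hash the raw secret down to a 32-byte symmetric key.
    return hashlib.sha256(secret.to_bytes(16, "big")).digest()


# Each robot publishes only its public value (e.g. in a key list).
robot_a_private, robot_a_public = keypair()
robot_b_private, robot_b_public = keypair()

# Both sides arrive at the same key without ever sending it.
key_at_a = shared_key(robot_a_private, robot_b_public)
key_at_b = shared_key(robot_b_private, robot_a_public)
```

Because the public values can be checked against the lists of valid robot keys, each robot knows who it is agreeing a key with.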

Now we have the ability for robots to learn and post results on a secure autonomous network. AI networks can share neural networks suited for specific tasks. General AI may be aided in such scenarios by multiple networks that can be accessed globally and cannot be corrupted, whilst remaining validated at all times (many AI bots could rerun the network to prove results are legitimate). Reinforcement learning and neuroevolution bots could share experiences from similar but not identical findings of the real world.

It could prove to be rather exciting to dive deeper into further posts. It is the sort of thinking that drove the need to create SAFE in the first place. The fact servers are unnatural and illogical is bad, but their susceptibility to corruption is much worse. These mechanisms require incorruptible networks, or at least the ability to recognise and control bad information. We need secure autonomous networks for some really exciting collaboration and advances. I would love to investigate and debate these opportunities with a wider audience. It’s not possible without this autonomous network though. This is why I’m so excited by the prospect of SAFE.

3: Healthcare Industry

Photo by freestocks.org on Unsplash

The interesting thing about scanners and medical devices is that they don’t need to know your name!

A scanner cannot make use of your name. Today we can, at exponentially decreasing costs, decode your whole genome. Scanners for home use (see the Tricorder XPRIZE) will improve dramatically and at an increasing pace, with significant cost savings.

Here’s a thought: instead of protecting medical records, why not just remove them completely?

Here’s another thought: instead of building more hospitals and staffing them, could we not let technology help, especially with ageing populations and more life extension programs working efficiently? Hospital and staff shortages could be offset with much better tools to do the job. By this I mean building testing equipment and using genomics and proteomics to reduce the need for hospitals and staff. The answer to hospital shortages could well be to remove almost all of them, except for trauma centres and centres that provide assistance, such as maternity units.

With some of these ideas we could look to revolutionise healthcare and increase the health of the population with dramatically decreased budgets and much less human error. We could become much more caring and able to look after those who need help, and at the point of need, which should be the point of diagnosis and immediate delivery of solutions. With home diagnosis equipment it’s quite likely that in the future your devices could detect disease faster than you realise your antibodies are responding to it. Imagine never feeling ‘under the weather’ again?! Of course there will always be reasons to feel a bit down some days and up on other days, the human experience will always be relative to its context.

You can probably tell that I have a bit of a ‘bee in my bonnet’ on this one. It frustrates me to see people refused care, or drugs not being used due to cost. We can do better. If we could diagnose, deliver care and medication at home (ultimately we might be able to fix our own problems ourselves) then we can potentially revolutionise healthcare for everyone.

We now have the solutions above for the autonomous vehicles, robotics and AI behind us. Those tools are in our arsenal and we can proceed from here. This is rather exciting to me and a huge win for humanity. So we know measurement devices do not know our name, we know that accurate scans can tell a lot about our current physique and detect anomalies. We know that genetic sequencing can accurately map our physical makeup and detect anomalies there. This means we could actually achieve a goal of instant medication and resolution to problems, but how do we get from here to there?

Potential Solution

We can already see how medical devices, like robots, can communicate with each other on an autonomous network such as SAFE. These devices can measure many things, including DNA, genome, fingerprints and much more. Therefore, they can link scans to identities, but they should see each ‘thing’ they scan as a unique set of atoms that are (hopefully) all connected correctly with a genome to ascertain its health. As a patient is scanned any anomaly will be treated. The treatment should be supplied or recommended by the scanner. When the treatment is applied, the scanner will scan again and be able to remember the condition and the treatment.

This gives the scanners a view of what works and what does not for the huge numbers of unique humans they’ll deal with. In short, the scanners can test treatments and measure outcomes. With that information and the sharing mechanism described above in the AI scenario, they can take the findings from the whole human population to ensure the best possible treatment, based on massive sampling. In this manner the scans of a problem can be combined with the relevant DNA/RNA strand to relate the scan to others of similar composition. That should mean treatments are continually improving. This continual improvement not only makes us all healthier with improved longevity, but also dramatically reduces the health budget. Hopefully that would mean health becomes universal, breaking down the gap between rich and poor, people and countries.
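The feedback loop of scan, treat, rescan and share can be sketched as a simple tally of outcomes. In this hedged Python example, `OutcomeLedger`, the condition and treatment labels and the sample records are all invented; note that no name or patient identity is stored anywhere, only what was seen and whether the treatment resolved it.

```python
from collections import defaultdict


class OutcomeLedger:
    """Shared tally of (condition, treatment) outcomes, with no patient names."""

    def __init__(self) -> None:
        # (condition, treatment) -> [times resolved, times tried]
        self._counts = defaultdict(lambda: [0, 0])

    def record(self, condition: str, treatment: str, resolved: bool) -> None:
        """Store the result of the follow-up scan after a treatment."""
        tally = self._counts[(condition, treatment)]
        tally[0] += int(resolved)
        tally[1] += 1

    def success_rate(self, condition: str, treatment: str):
        resolved, tried = self._counts.get((condition, treatment), (0, 0))
        return resolved / tried if tried else None

    def best_treatment(self, condition: str):
        """Return the treatment with the highest observed success rate."""
        rates = {
            treatment: resolved / tried
            for (cond, treatment), (resolved, tried) in self._counts.items()
            if cond == condition and tried
        }
        return max(rates, key=rates.get) if rates else None


ledger = OutcomeLedger()
# Hypothetical results posted by scanners around the world.
for resolved in (True, True, False):
    ledger.record("anomaly-x", "treatment-a", resolved)
for resolved in (True, False, False):
    ledger.record("anomaly-x", "treatment-b", resolved)

recommended = ledger.best_treatment("anomaly-x")
rate_a = ledger.success_rate("anomaly-x", "treatment-a")
```

A real system would also group results by genetic similarity, as described above, so that a recommendation is drawn from people of similar composition rather than from the whole population at once.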

Conclusion

This post has attempted to give a tiny (minuscule) glimpse into the possibilities of autonomous, connected, non-owned data and computation capabilities. Many types of machine, intelligence and future invention could collaborate simply, freely and without corruption. There would be an initial requirement for humans to start such systems, but they should quickly become self-managing. This will mean there’s soon zero requirement for human intervention, possibly apart from the engineers who will provide updates as technology improves. Another post, though, will investigate this particular feature of autonomy in reference to data networking and beyond.

I hope this has been a useful exercise, or at least will help to probe the imagination and let us look at problems from a different perspective. In a secure and autonomous data network though, many of today’s issues simply evaporate. I certainly hope this post has challenged some people to think differently.